Visual voice activity detection as a help for speech source separation from convolutive mixtures

Product Description

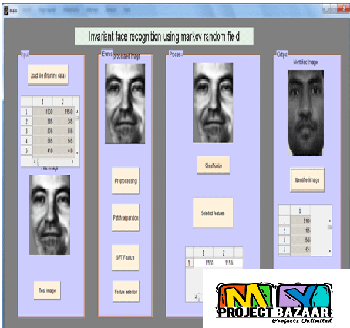

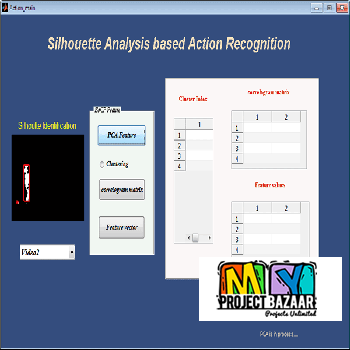

Abstract: Audio-visual speech source separation combines visual speech processing techniques (e.g. lip parameter tracking) with source separation methods to improve and/or simplify the extraction of a speech signal from a mixture of acoustic signals. In this paper, we present a new approach to this problem: visual information is used as a voice activity detector (VAD). Results show that, in the difficult case of realistic convolutive mixtures, the classic problem of the permutation of the output frequency channels can be solved using the visual information, with simpler processing than when using only audio information.
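To make the idea concrete, here is a minimal sketch of how a visual VAD could help with the permutation problem. It is an illustration under stated assumptions, not the algorithm from the paper: the function names `visual_vad` and `target_channel_per_bin`, the motion measure, and the `threshold` and `hangover` values are all hypothetical, and video and audio frames are assumed to be aligned one-to-one. The reasoning is that when the lips of the target speaker are still, the output channel carrying that speaker should hold the least power in every frequency bin.

```python
def visual_vad(lip_width, lip_height, threshold=0.5, hangover=2):
    """Return one boolean per frame: True means the lips are moving.

    Assumption: lip_width and lip_height are per-frame measurements from
    some lip tracker; threshold and hangover are illustrative values.
    """
    n = len(lip_width)
    moving = [False] * n
    for t in range(1, n):
        # Frame-to-frame change of the lip shape, a crude motion measure.
        motion = (abs(lip_width[t] - lip_width[t - 1])
                  + abs(lip_height[t] - lip_height[t - 1]))
        moving[t] = motion > threshold
    # Hangover: stay "active" for a few frames after the last movement,
    # so short pauses inside speech are not labelled as silence.
    active = list(moving)
    for t in range(n):
        if moving[t]:
            for k in range(t + 1, min(n, t + 1 + hangover)):
                active[k] = True
    return active


def target_channel_per_bin(power, active):
    """Pick, for each frequency bin, the output channel of the target speaker.

    power[f][c][t] is the power of output channel c in bin f at frame t.
    In frames the VAD marks as silent, the target channel should be the
    quietest, so the channel with the lowest mean power there is chosen.
    """
    silent = [t for t, is_active in enumerate(active) if not is_active]
    if not silent:
        raise ValueError("the VAD found no silent frames to compare on")
    choice = []
    for bin_power in power:
        means = [sum(ch[t] for t in silent) / len(silent) for ch in bin_power]
        choice.append(min(range(len(means)), key=means.__getitem__))
    return choice
```

Selecting the same speaker's channel in every bin is what removes the permutation ambiguity in this sketch; a practical system would also need a more robust motion measure and a way to handle bins where the power difference is small.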


