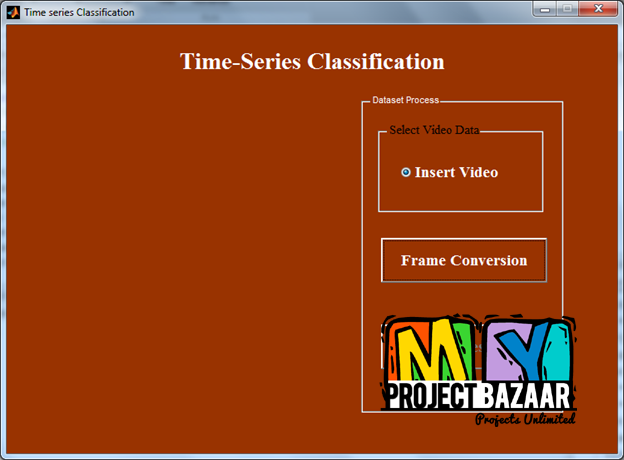

Probabilistic Sequence Translation-Alignment Model for Time-Series Classification

Product Description

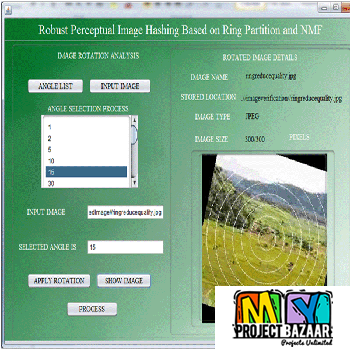

Abstract—We tackle the time-series classification problem with a novel probabilistic model that represents the conditional densities of observed sequences as time-warped and transformed versions of an underlying base sequence. We call it the probabilistic sequence translation-alignment model (PSTAM) because it captures both the alignment of features and the mapping between sequences, analogous to translating one language into another in machine translation. To handle general time series, we impose time-monotonicity constraints on the hidden alignment variables in the model parameter space; marginalizing these variables out allows class-specific time warping and feature transformation to be learned simultaneously. PSTAM thus combines the advantages of two approaches widely used in sequence classification: 1) alignment-based methods, which estimate distances between sequences of unequal length by directly comparing aligned features, and 2) model-based methods, which effectively capture class-specific patterns or trends. Furthermore, modeling the latent base sequence in a low-dimensional space provides a way to discover intrinsic manifold structure that may be present in the observed data, yielding an unsupervised manifold-learning method for sequence data. We demonstrate the benefits of the proposed approach through comprehensive evaluations on both synthetic and real-world sequence data sets.
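The abstract does not state the model's equations, so the following is only a minimal sketch of the general idea under stated assumptions, not the authors' PSTAM. It assumes a linear-Gaussian feature transform, x_t ≈ W z_{a_t} + b with isotropic noise sigma, and monotone alignments in which each observed frame either stays on the current base frame or advances by one. The function names, the fixed stay/advance weights and the parameter layout are hypothetical. The sketch shows how summing over all monotone hidden alignments reduces to a forward recursion, and how per-class likelihoods could then be compared to classify a sequence. Parameter learning is not shown.

```python
import numpy as np


def gaussian_logpdf(x, mean, sigma):
    """Log density of an isotropic Gaussian N(x; mean, sigma^2 I) over the last axis."""
    d = x.shape[-1]
    sq = np.sum((x - mean) ** 2, axis=-1)
    return -0.5 * (d * np.log(2 * np.pi * sigma ** 2) + sq / sigma ** 2)


def log_marginal_likelihood(x, z, W, b, sigma,
                            log_stay=np.log(0.5), log_move=np.log(0.5)):
    """log of the sum over monotone alignments of p(x, alignment | z, W, b, sigma).

    x: (L, D) observed sequence; z: (T, d) base sequence (hypothetical layout).
    The alignment starts at base frame 0, ends at base frame T-1, and each
    step either stays (weight exp(log_stay)) or advances by one frame
    (weight exp(log_move)). These weights are hypothetical placeholders.
    """
    L, T = x.shape[0], z.shape[0]
    means = z @ W.T + b  # (T, D): base frames mapped into observation space
    # emit[t, k] = log N(x_t; W z_k + b, sigma^2 I), shape (L, T)
    emit = gaussian_logpdf(x[:, None, :], means[None, :, :], sigma)
    alpha = np.full(T, -np.inf)
    alpha[0] = emit[0, 0]  # the first observed frame is aligned to base frame 0
    for t in range(1, L):
        stay = alpha + log_stay
        # Advancing into frame k comes from frame k-1; frame 0 cannot be entered this way.
        move = np.concatenate(([-np.inf], alpha[:-1] + log_move))
        alpha = np.logaddexp(stay, move) + emit[t]
    return alpha[-1]  # -inf if no monotone alignment reaches the last base frame


def classify(x, class_models):
    """Return the class whose (hypothetical) parameters give x the highest score."""
    scores = {c: log_marginal_likelihood(x, **params)
              for c, params in class_models.items()}
    return max(scores, key=scores.get)
```

For example, with one-dimensional base sequences `[0, 1, 2]` for a class "up" and `[2, 1, 0]` for a class "down" (and `W` the identity, `b` zero), a time-warped ramp such as `[0, 0, 1, 2, 2]` should receive a much higher score under "up". Working in log space with `np.logaddexp` keeps the sum over alignments numerically stable for long sequences.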
