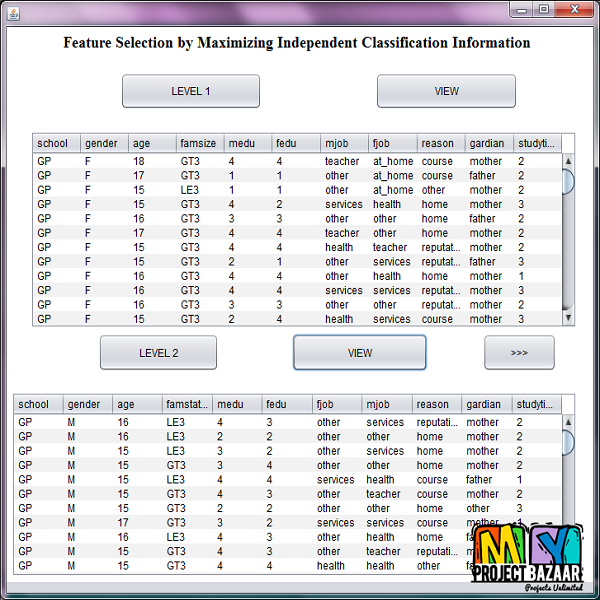

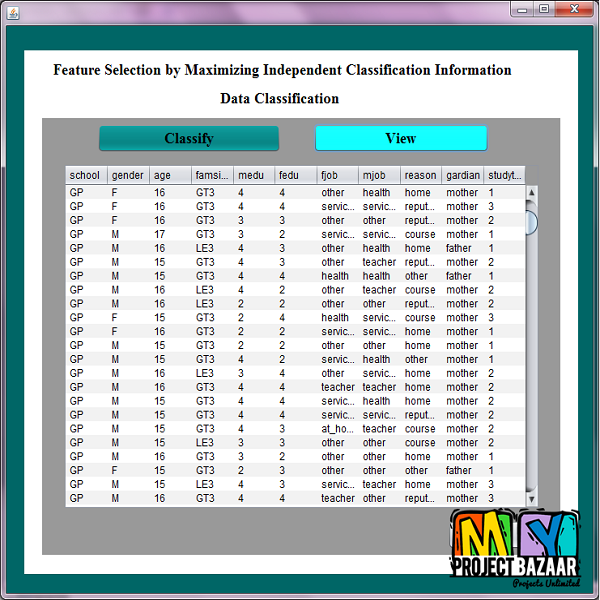

Feature Selection by Maximizing Independent Classification Information

Product Description

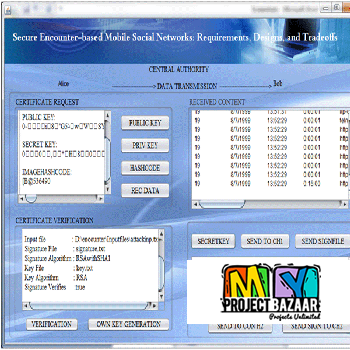

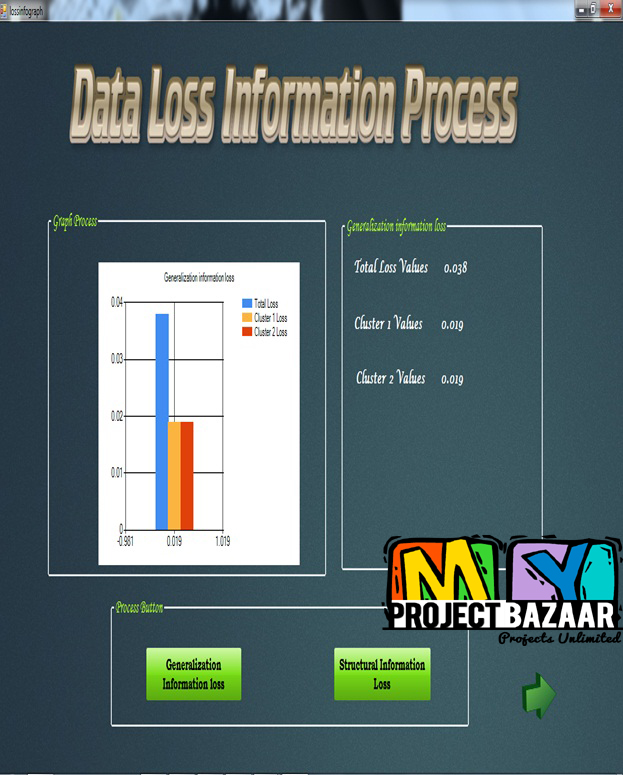

Abstract: Feature selection approaches based on mutual information can be roughly categorized into two groups. The first group minimizes the redundancy among features. The second group maximizes the new classification information that a feature provides to the already selected subset. A critical issue is that large new information does not imply little redundancy, and vice versa. The second group may select features that carry large new information but are highly redundant, while the first group may give high scores to features that have low redundancy but little relevance to the classes. Existing approaches fail to balance the importance of the two terms. This paper therefore proposes a new information term, Independent Classification Information. It combines the newly provided information with the preserved information that is negatively correlated with the redundant information. Redundancy and new information are thus unified and treated equally in the new term. This strategy helps find predictive features that provide large new information with little redundancy. Moreover, independent classification information is proved to be a loose upper bound of the total classification information of the feature subset, so maximizing it is conducive to achieving high global discriminative performance. Comprehensive experiments demonstrate the effectiveness of the new approach.
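The idea in the abstract can be illustrated with a small greedy selector for discrete features. This is only a sketch under stated assumptions, not the paper's implementation: the abstract gives no formula, so the pairwise term is assumed here to be the new information I(f; C | s) plus the preserved information I(s; C | f) for a candidate f and an already selected feature s. The averaging over the selected set, and the function names `entropy`, `mutual_info`, `cond_mutual_info`, and `greedy_select`, are hypothetical choices made for illustration.

```python
import numpy as np

def entropy(*cols):
    """Joint Shannon entropy (bits) of one or more discrete 1-D arrays."""
    joint = np.stack(cols, axis=1)
    _, counts = np.unique(joint, axis=0, return_counts=True)
    p = counts / counts.sum()
    return float(-(p * np.log2(p)).sum())

def mutual_info(x, y):
    """I(x; y) = H(x) + H(y) - H(x, y)."""
    return entropy(x) + entropy(y) - entropy(x, y)

def cond_mutual_info(x, y, z):
    """I(x; y | z) = H(x, z) + H(y, z) - H(x, y, z) - H(z)."""
    return entropy(x, z) + entropy(y, z) - entropy(x, y, z) - entropy(z)

def greedy_select(X, y, k):
    """Greedily pick k column indices of the discrete matrix X for labels y.

    Assumed score (not taken from the source): relevance I(f; C) plus the
    average over selected s of [I(f; C | s) + I(s; C | f)].
    """
    selected = []
    remaining = list(range(X.shape[1]))

    def score(j):
        f = X[:, j]
        relevance = mutual_info(f, y)
        if not selected:
            return relevance
        pair_terms = [
            cond_mutual_info(f, y, X[:, s])      # new information from f
            + cond_mutual_info(X[:, s], y, f)    # information s preserves
            for s in selected
        ]
        return relevance + sum(pair_terms) / len(selected)

    while remaining and len(selected) < k:
        best = max(remaining, key=score)
        selected.append(best)
        remaining.remove(best)
    return selected

# Toy data: the class is determined by two independent bits a and b.
a = np.array([0, 0, 1, 1, 0, 0, 1, 1])
b = np.array([0, 1, 0, 1, 0, 1, 0, 1])
X = np.column_stack([a, a, b])   # column 1 duplicates column 0
y = 2 * a + b
print(greedy_select(X, y, 2))
```

In this toy data all three columns are equally relevant on their own, but the duplicated column contributes no new information once column 0 is chosen, so the pairwise term steers the second pick toward the column carrying bit b.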

Additional Information

| Domains | |
|---|---|
| Programming Language |