Mining Coherent Topics with Pre-learned Interest Knowledge in Twitter

Product Description


Abstract-Discovering semantic coherent topics from the large amount of User-Generated Content (UGC) in social media would facilitate many downstream applications of intelligent computing. Topic models, as one of the most powerful algorithms, have been widely used to discover the latent semantic patterns in text collections. However, one key weakness of topic models is that they need documents with certain length to provide reliable

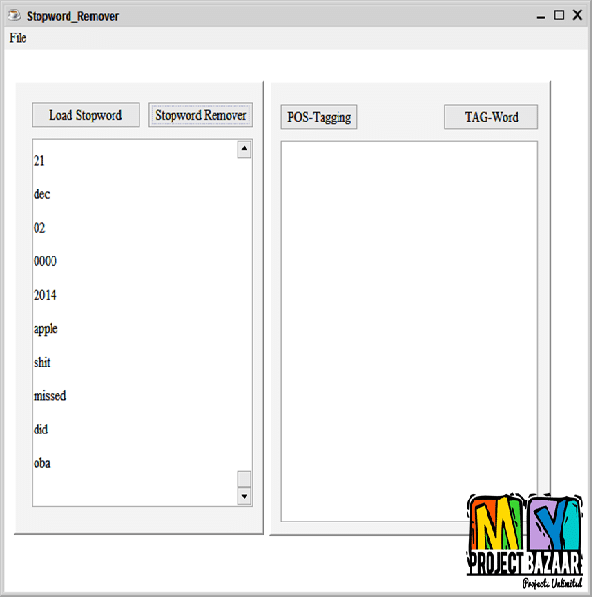

statistics for generating coherent topics. In Twitter, the users’ tweets are mostly short and noisy. Observations of word cooccurrences are incomprehensible for topic models. To deal with this problem, previous work tried to incorporate prior knowledge to obtain better results. However, this strategy is not practical for the fast evolving user generated content in Twitter. In this paper, we first cluster the users according to the retweet network, and

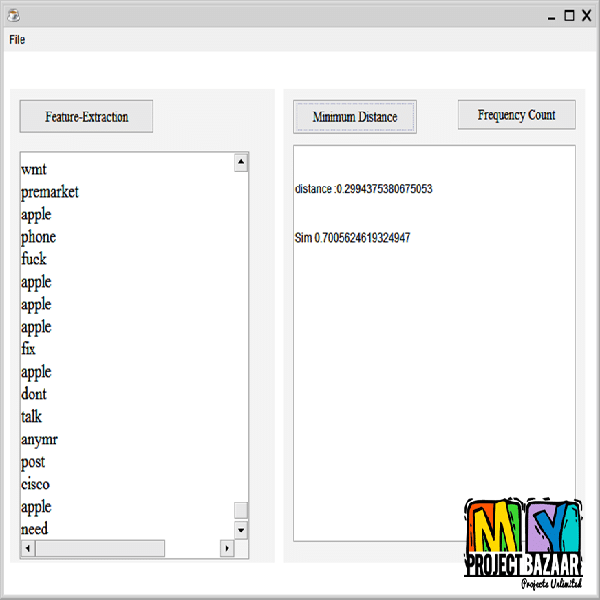

the users’ interests are mined as the prior knowledge. Such data is then applied to improve the performance of topic learning. The potential cause for the effectiveness of this approach is that users in the same community usually share similar interests, which will result in less noisy sub-datasets. Our algorithm pre-learns two types of interest knowledge from the dataset: the interestword-sets and a tweet-interest preference matrix. Furthermore, a dedicated background model is introduced to judge whether a word is drawn from the background noise. Experiments on two

real life twitter datasets show that our model achieves significant improvements over state-of-the-art baselines.
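The pipeline outlined in the abstract (cluster users by retweet links, then mine per-community interest words while discounting background words) can be illustrated with a small Python sketch. This is not the authors' algorithm. Connected components stand in for the community-detection step, and "appears in every community" stands in for the background model. All function names, parameters, and sample data are hypothetical.

```python
from collections import Counter, defaultdict


def cluster_users(retweets):
    """Assign each user a community id.

    Assumption: communities are approximated by connected components of the
    retweet graph (union-find); the abstract does not name the clustering method.
    """
    parent = {}

    def find(u):
        parent.setdefault(u, u)
        while parent[u] != u:
            parent[u] = parent[parent[u]]  # path halving
            u = parent[u]
        return u

    for a, b in retweets:
        ra, rb = find(a), find(b)
        if ra != rb:
            parent[rb] = ra
    return {u: find(u) for u in list(parent)}


def interest_word_sets(tweets, community_of, top_k=3):
    """Return {community: top_k frequent words}, skipping background words.

    Assumption: a word used in every community is treated as background noise,
    a crude stand-in for the dedicated background model in the abstract.
    """
    counts = defaultdict(Counter)
    for user, text in tweets:
        if user in community_of:
            counts[community_of[user]].update(text.lower().split())

    n_communities = len(counts)
    doc_freq = Counter(w for c in counts.values() for w in c)
    background = {
        w for w, df in doc_freq.items() if n_communities > 1 and df == n_communities
    }
    return {
        comm: [w for w, _ in c.most_common() if w not in background][:top_k]
        for comm, c in counts.items()
    }


# Hypothetical toy data: (retweeter, original author) pairs and (user, tweet) pairs.
retweets = [("alice", "bob"), ("carol", "dave")]
tweets = [
    ("alice", "the match was great"),
    ("bob", "the match tonight"),
    ("carol", "the election debate"),
    ("dave", "the election results"),
]
community_of = cluster_users(retweets)
word_sets = interest_word_sets(tweets, community_of)
```

In this toy data, "the" occurs in both communities and is filtered out, so each community's list is led by its own topical word. A full system would feed such word sets into the topic model as prior knowledge, which this sketch does not attempt.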

Additional Information

| Domains | |
|---|---|
| Programming Language |